I have been seeing LinkedIn posts about Panthalassa’s wave-powered AI data-center concept recently, and the reaction they’ve been getting is familiar. Big funding round. AI power bottleneck. Ocean energy. No grid connection. No land constraint. Autonomous machines. A new category. It had all the ingredients of a story built to move fast through a feed. My reaction was not that waves do not contain energy, because of course they do. My reaction was that the system looked broken once it was treated as infrastructure rather than as a clever prototype.

When I saw Peter Thiel was leading the $140 million round, I was not surprised. That is not a comment on his intelligence or even on the legitimacy of venture capital taking high-risk shots, although both are worth considering. It is a reference-class observation. Thiel has been attracted before to ideas that try to route around the existing energy system with a bold new architecture, as if the reason the existing system is slow is mainly that incumbents lack imagination. That has worked in some software markets. In energy, slowness is often the visible surface of physics, weather, regulation, insurance, maintenance, capital discipline, and the unpleasant habit of machinery breaking when exposed to the real world.

Panthalassa is trying to solve a somewhat real problem. Proposed AI data centers are struggling to secure enough power quickly enough in places where grids, land, permitting, and interconnection are constrained. Its proposed solution is sloppy but compelling. Put the compute where the energy is. Use large autonomous ocean nodes to convert wave motion into electricity, use the electricity onboard for AI inference, and send the results back through satellite links. Avoid the subsea export cable. Avoid the grid connection. Avoid the land fight. Avoid waiting years for a utility-scale interconnection. That is a clean narrative. Sadly, the ocean does not care about clean narratives.

Panthalassa did not start as an AI data-center company. Its pre-AI focus was wave energy itself: converting open-ocean wave motion into low-cost electricity using autonomous floating nodes. The company describes itself as a public-benefit corporation building a planetary-scale clean energy platform, and outside profiles describe it as founded by Garth Sheldon-Coulson and Brian Moffat to unlock ocean wave power at terawatt scale. Its earlier work included Ocean-1, Ocean-2, and Wavehopper prototypes, with Ocean-2 described in the PRIMRE marine-energy database as an overtopping wave-energy converter in which the device’s bobbing motion forces water through an internal pipe and then through a turbine. The AI data-center framing appears to be the newer commercialization path: instead of trying to export electricity to shore through expensive subsea infrastructure, Panthalassa is now trying to put a flexible load, AI inference, directly on the wave-energy platform and send results back by satellite. That is a meaningful strategic pivot. It does not make the wave-energy problem disappear. It reframes the company from “how do we get wave electricity to market?” to “can we turn wave electricity into useful compute without depending on the grid?”

Wave energy has been one of clean energy’s longest-running stories without a commercial success. The physics are attractive: waves are dense, visible, and predictable enough to tempt engineers into thinking the ocean is an obvious power plant. The operating history is less kind. Since the 1970s oil shocks, wave-energy developers have cycled through point absorbers, attenuators, oscillating water columns, overtopping devices, submerged pressure devices, flaps, rafts, snakes, buoys, and hydraulic systems. Almost every design looks elegant in tank tests, prototypes, and animations. Almost every design then meets the same reference class: saltwater, corrosion, fouling, fatigue, storms, survivability, installation cost, maintenance access, grid connection, and weak economics against wind and solar.

Pelamis was once the flagship wave-energy machine in Scotland and ended in administration. Aquamarine Power’s Oyster device was another high-profile Scottish contender and also failed commercially. Ocean Power Technologies spent decades trying to commercialize PowerBuoy systems and never became a large electricity supplier. Oscillating water column systems have worked as demonstrations, including Portugal’s Pico plant and Scotland’s Limpet, but not as a scalable power industry. The field has produced knowledge, components, test sites, and occasional niche devices, but not a mature generation class. I’ve written it off because decades of attempts have borne out the first-principles critiques. It’s dead tech floating.

That history matters for Panthalassa because avoiding the grid connection does not erase the wave-energy reference class. It removes one historical failure mode while leaving the ocean’s core vetoes in place: the machine must survive, keep producing, avoid becoming a hazard, and be cheap enough to maintain.

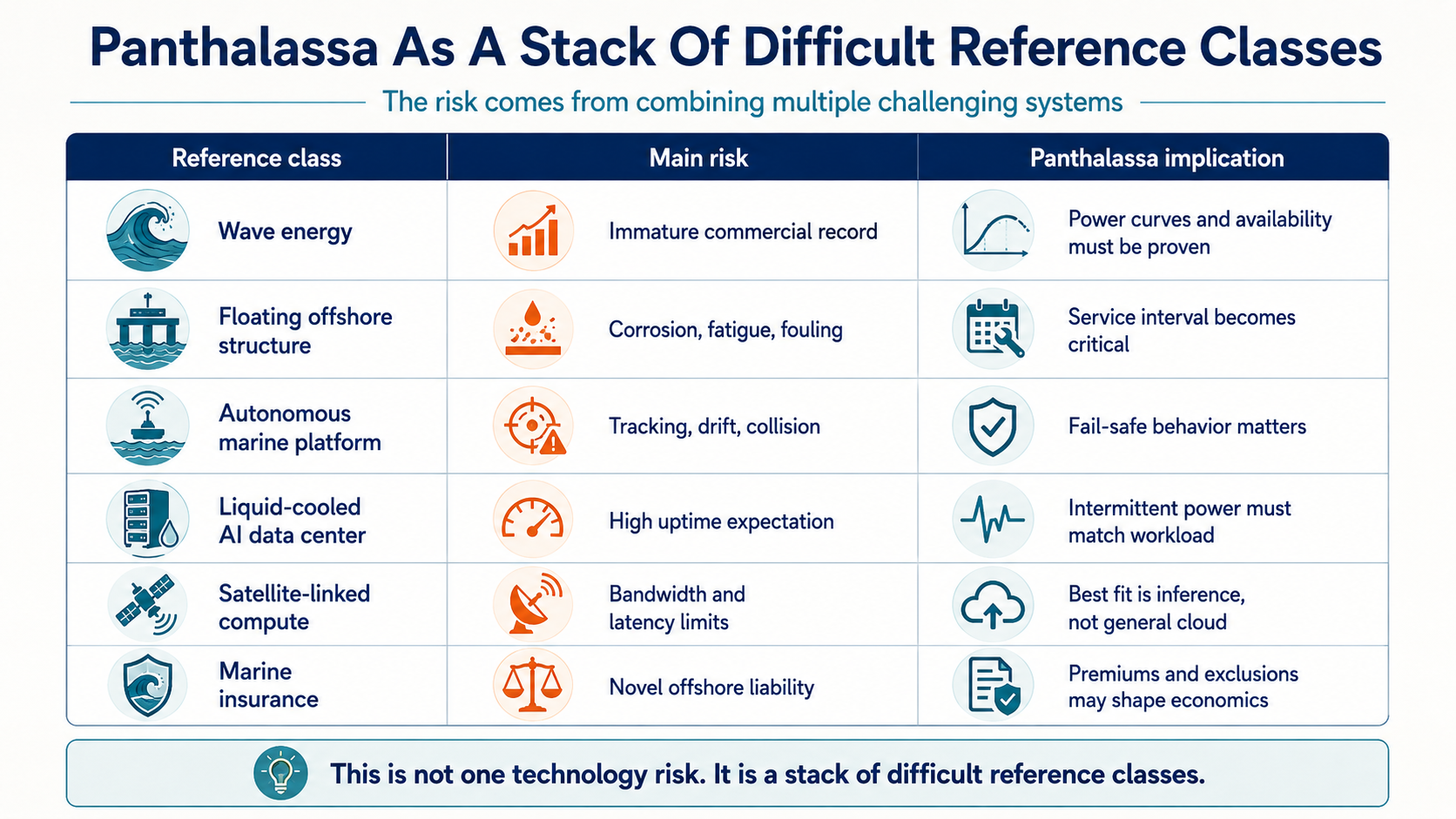

The key thing to understand is that Panthalassa is not one technology. It is a stack of hard technologies and harder operations. It is wave-energy conversion, floating offshore structure design, autonomous marine stationkeeping, corrosion control, biofouling management, seawater-adjacent cooling, high-density AI compute, satellite-linked networking, maritime safety, offshore maintenance, and insurance. Each one has its own reference class, and none of the difficult parts becomes easier because the pitch deck puts them in one box. In fact, stacking them compounds the risk.

The first category error is treating wave energy as if it were useful electricity just because the resource is large. Wave energy is real. Open-ocean waves carry meaningful power, often expressed in kW per meter of wave crest. A 2 meter significant wave height with a 10 second period has about 20 kW per meter. A 3 meter, 10 second sea is about 44 kW per meter. A 4 meter, 12 second sea can be around 94 kW per meter. Those are useful numbers, but they are not data-center numbers yet. A device has to capture some share of that moving water, convert it to mechanical work, pass it through turbines and generators, condition the power, buffer it, cool the compute, maintain communications, run controls and sensors, preserve safety functions, and hold some kind of position in the ocean. The useful AI output is the last number in the chain, not the first.
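The per-meter figures above can be reproduced with the standard deep-water approximation for wave energy flux, P = ρg²Hs²T / (64π). The sketch below is a rough check, not a resource assessment. It assumes a seawater density of 1025 kg/m³, and it treats the quoted period as the energy period, which the text does not specify. The function name is illustrative.

```python
import math

RHO_SEAWATER = 1025.0  # kg/m^3, assumed typical seawater density
G = 9.81               # m/s^2, standard gravity

def wave_power_kw_per_m(hs_m: float, period_s: float) -> float:
    """Deep-water wave energy flux per meter of wave crest, in kW/m.

    hs_m is significant wave height; period_s is assumed to be the energy period.
    """
    watts_per_m = RHO_SEAWATER * G**2 * hs_m**2 * period_s / (64.0 * math.pi)
    return watts_per_m / 1000.0

# The three sea states quoted in the text.
for hs, period in [(2, 10), (3, 10), (4, 12)]:
    print(f"Hs = {hs} m, T = {period} s: {wave_power_kw_per_m(hs, period):.1f} kW/m")
```

Because power scales with the square of wave height, a modest change in sea state moves the available resource a long way in either direction.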

A kW in the wave is not a kW at the GPU. A kW at the turbine is not a kW of useful compute. A kW of peak compute is not a kW of reliable service. Every useful AI token has to survive an offshore loss stack before it reaches a customer. Intermittency takes a bite. Capture efficiency takes a bite. Turbine and generator efficiency take a bite. Power electronics and storage take a bite. Cooling takes a bite. Stationkeeping takes a bite. Communications and controls take a bite. Fouling and corrosion take repeated bites over time. This is not one efficiency number. It is a chain of efficiency, availability, and degradation terms multiplied together.
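The multiplication is worth seeing once. The sketch below takes the eight stages named above and gives every one of them the same retained fraction of 90%. That figure is a placeholder chosen only to show how the terms compound. It is not a measurement and not an estimate for any real device.

```python
from math import prod

# The eight stages named in the text. The 0.90 retained fraction is a
# hypothetical placeholder, identical for every stage, used only to show
# how multiplied terms compound.
PLACEHOLDER_RETAINED = 0.90
loss_stack = {
    "intermittency": PLACEHOLDER_RETAINED,
    "capture": PLACEHOLDER_RETAINED,
    "turbine_and_generator": PLACEHOLDER_RETAINED,
    "power_electronics_and_storage": PLACEHOLDER_RETAINED,
    "cooling": PLACEHOLDER_RETAINED,
    "stationkeeping": PLACEHOLDER_RETAINED,
    "communications_and_controls": PLACEHOLDER_RETAINED,
    "fouling_and_corrosion": PLACEHOLDER_RETAINED,
}

def delivered_fraction(stack: dict) -> float:
    """Fraction of the starting power left after every stage takes its bite."""
    return prod(stack.values())

print(f"Delivered fraction: {delivered_fraction(loss_stack):.2f}")
```

Even with every stage at a generous 90%, less than half of the starting power reaches the end of the chain. Real stages will not be equal, and some, such as capture efficiency, are likely to be far lower.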

The 1 MW node question illustrates the problem. A 1 MW AI load is not an outrageous amount of compute in packaging terms. Modern high-density AI racks can run well above 100 kW per rack, so a megawatt-class compute module may be fewer than 10 racks. Fitting the electronics into an 85 meter marine structure is not the hardest part. Feeding those racks reliable power from waves is the hard part. A 1 MW net IT load likely needs something like 1.3 MW to 2 MW of average gross electrical output once cooling, power conversion, controls, communications, storage losses, and stationkeeping are included. If the wave-energy device has a 25% to 35% effective capacity factor, that implies several MW of gross rated capability to support 1 MW of average useful IT. That is a large claim for one autonomous first-generation wave-powered offshore machine.
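The sizing logic above reduces to one line of arithmetic: rated capacity equals net IT load times the gross-to-net overhead, divided by capacity factor. A minimal sketch using the ranges from the text follows. The function and parameter names are illustrative.

```python
def required_rated_mw(net_it_mw: float, gross_per_net: float, capacity_factor: float) -> float:
    """Gross rated capacity needed to deliver an average net IT load.

    gross_per_net: average gross electrical MW needed per MW of net IT load.
    capacity_factor: average output divided by rated output.
    """
    return net_it_mw * gross_per_net / capacity_factor

# Best case from the text: 1.3x overhead with a 35% capacity factor.
best_case = required_rated_mw(1.0, 1.3, 0.35)
# Worst case from the text: 2.0x overhead with a 25% capacity factor.
worst_case = required_rated_mw(1.0, 2.0, 0.25)

print(f"Rated capacity for 1 MW net IT: {best_case:.1f} to {worst_case:.1f} MW")
```

That works out to roughly 3.7 MW to 8 MW of rated capability per node to sustain 1 MW of average useful IT.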

It is also important to be fair about the compute fit. Panthalassa’s concept makes more sense for AI inference than for frontier model training or general cloud computing. Inference workloads can sometimes be shaped as small input, lots of computation, and small output. That can work better over satellite links than a tightly coupled training cluster where racks and GPUs need high-bandwidth, low-latency interconnection. This concession matters. The idea is not technically meaningless. There are workloads that are delay-tolerant, bandwidth-light, and geographically indifferent. But that narrows the claim. It makes the system a possible niche offshore inference platform. It does not make it a floating replacement for a land-based AI campus.

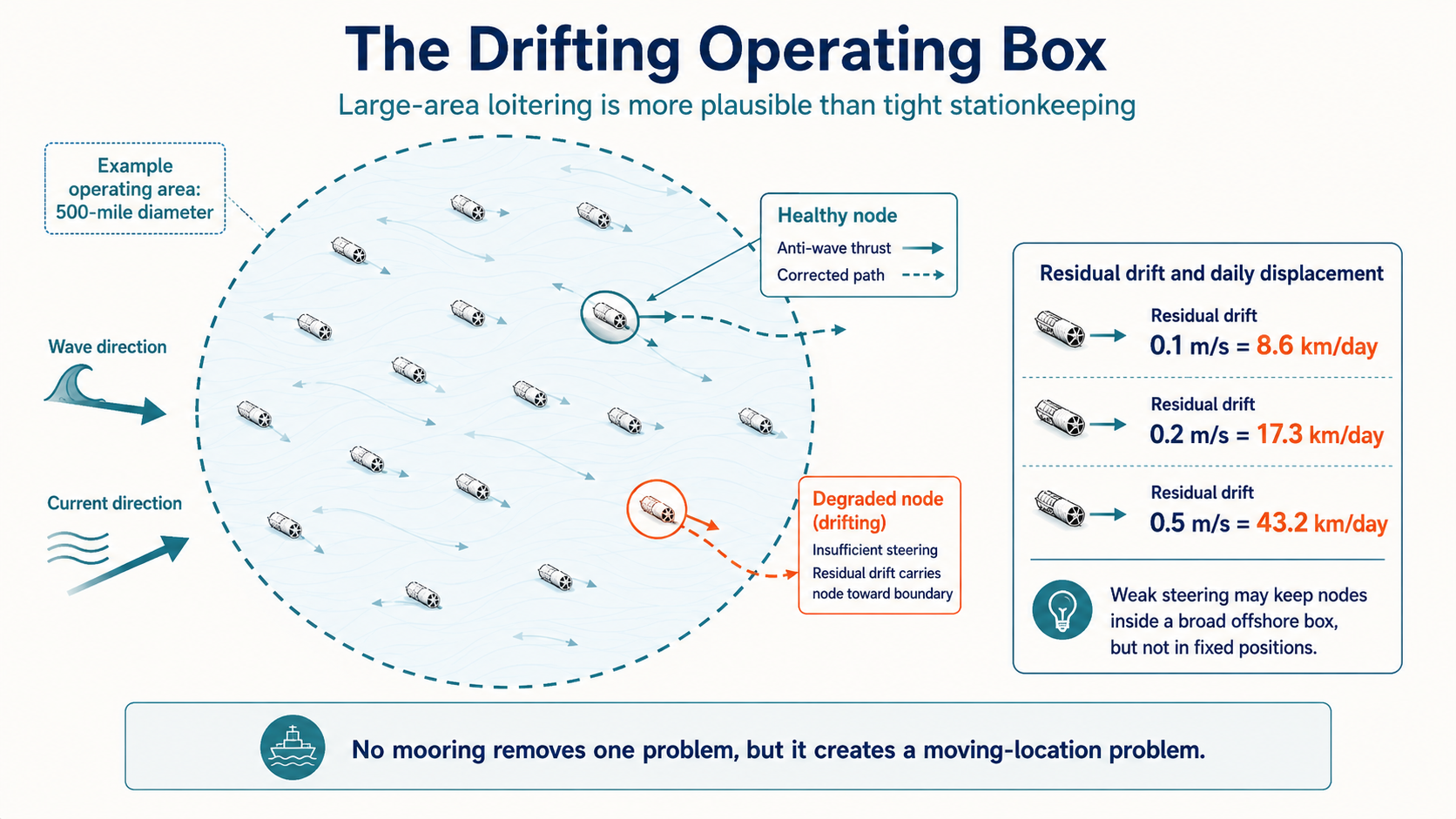

The next problem is stationkeeping. No mooring sounds like a win because moorings and dynamic subsea cables are two of the historic killers of wave-energy economics. But no mooring is a trade, not a free lunch. A moored machine has cable, anchor, and seabed problems. An unmoored machine has drift, tracking, collision, recovery, insurance, and regulatory problems. The public visuals and patent clues suggest something closer to a wave-powered drifter with slow hydrodynamic correction than a conventional autonomous vessel. It may push against waves. It may use body rotation and a Magnus-effect steering concept. It may bias drift over hours or days. What is not visible is high-authority stationkeeping.

The arithmetic is simple and unforgiving. A residual drift of 0.1 meters per second is 8.6 kilometers per day. A residual drift of 0.2 meters per second is 17.3 kilometers per day. A residual drift of 0.5 meters per second is 43.2 kilometers per day. Those are not storm numbers. They are ordinary order-of-magnitude ocean-drift numbers. If a node can mostly push along or against the wave axis, any cross-current remains a problem. If it loses steering but keeps communications, it becomes a moving recovery job. If it loses steering and communications, it becomes a hard-to-see maritime hazard.
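The drift figures are a straight unit conversion from meters per second to kilometers per day, sketched here with an illustrative function name:

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400

def drift_km_per_day(speed_m_per_s: float) -> float:
    """Distance covered in one day at a constant residual drift speed."""
    return speed_m_per_s * SECONDS_PER_DAY / 1000.0

# The three residual drift speeds quoted in the text.
for speed in (0.1, 0.2, 0.5):
    print(f"{speed} m/s -> {drift_km_per_day(speed):.1f} km/day")
```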

Large-area loitering may be plausible. Tight stationkeeping is a different claim. A device that can stay somewhere inside a 500-mile-diameter ocean operating area is not holding position in the way offshore energy people usually mean it. A 500-mile diameter circle is about 805 kilometers across and covers roughly 508,000 square kilometers. That is an ocean region, not a project site. Spread 2,000 nodes across that area and the average spacing is roughly 16 kilometers. A node drifting 17 kilometers in a day has moved by about one fleet-spacing. If all units are healthy, tracked, and behaving as forecast, that may be manageable. If a fraction are degraded, fouled, or partially blind, the operating model changes from data-center operations to maritime fleet management.
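The area and spacing figures can be checked in a few lines. The sketch assumes statute miles and treats average spacing as the square root of the area available per node, which is a simplification of how a real fleet would be arranged.

```python
import math

KM_PER_MILE = 1.609344  # statute mile (assumed; the text says only "mile")

def operating_area_km2(diameter_miles: float) -> float:
    """Area of a circular operating region, in square kilometers."""
    radius_km = diameter_miles * KM_PER_MILE / 2.0
    return math.pi * radius_km ** 2

def average_spacing_km(area_km2: float, node_count: int) -> float:
    """Rough spacing if nodes were spread evenly: sqrt of area per node."""
    return math.sqrt(area_km2 / node_count)

area = operating_area_km2(500)
spacing = average_spacing_km(area, 2000)
print(f"Diameter: {500 * KM_PER_MILE:.0f} km")
print(f"Area: {area:,.0f} km^2")
print(f"Average spacing for 2,000 nodes: {spacing:.1f} km")
```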

The ocean then brings the maintenance stack. Salt spray attacks antennas, hatches, cable glands, sensors, lights, fasteners, panels, brackets, and grilles. The splash zone is worse than ordinary atmospheric exposure because surfaces are wetted, dried, re-wetted, and left with concentrated chlorides. Cathodic protection helps submerged steel. It does not protect every spray-wetted topside cable gland, hatch seam, sensor bracket, or electronics enclosure. Biofouling grows on openings, grilles, heat exchangers, hull surfaces, and any sheltered wet structure. It changes drag, mass, flow, cooling performance, and hydrodynamic symmetry. Wave loads create millions of cycles per year. At a 10 second period, there are about 3.15 million cycles per year before counting chop, storms, towing loads, and vibration.

The failure modes are not exotic. They are familiar marine failure modes. Coatings degrade. Crevices corrode. Mixed metals create galvanic couples. Grilles block. Valves stick. Flow paths foul. Bearings vibrate. Seals age. Cable glands leak. Sensors drift. Satellite antennas degrade. Navigation lights fail. Batteries lose reserve. Heat exchangers lose performance. Cooling pumps degrade. Software sees bad data from corroded or fouled instruments. Fishing gear snags protrusions. Storms damage the most exposed details. A land data-center rack failure leaves the rack in a building.

An ocean node failure may leave a partly submerged, poorly controllable, high-value steel object moving with waves and currents. Thousands of dead, massive, mostly submerged steel structures drifting on ocean currents would recreate the kind of collision hazard that sank the Titanic.

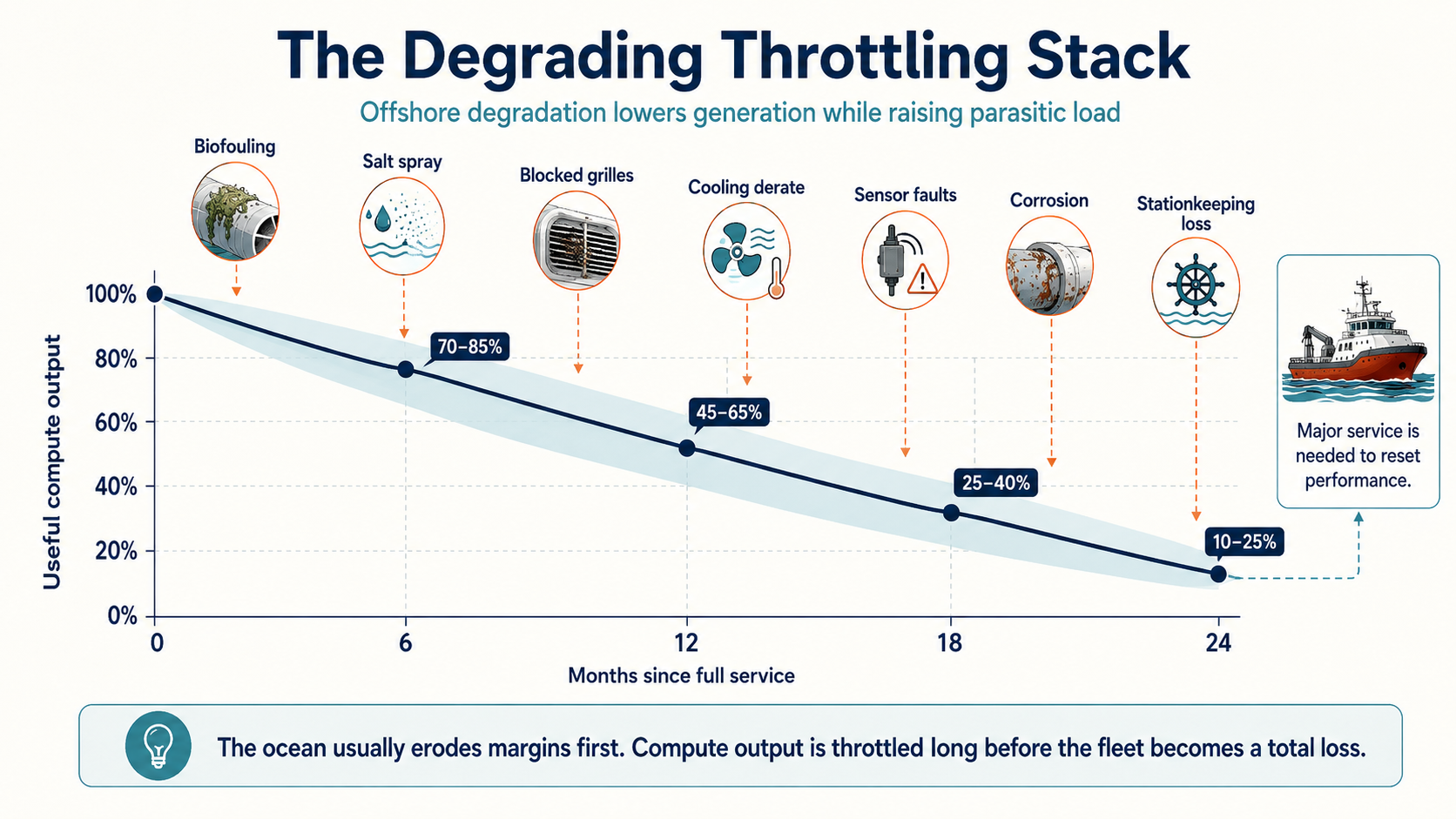

This is why I would model Panthalassa as a degrading throttling stack. The ocean usually does not break everything at once. It erodes margins. A little more drag. A little less flow. A slightly fouled heat exchanger. A partly blocked grille. A sensor with salt intrusion. A valve that no longer responds cleanly. A battery with less reserve. The compute load is then the thing that gets throttled because the platform must preserve survival functions: lights, communications, navigation, control, and recovery mode. In a land data center, a server issue is a maintenance ticket. In this system, the same class of issue can become a vessel dispatch.

The degradation curve is likely not a neat 2% annual loss like a solar panel. Without measured long-duration open-ocean data, a reference-class estimate would be ugly. After a few clean months, performance may look fine. After six months, fouling, salt deposits, sensor issues, and flow losses could cut useful output to perhaps 70% to 85% of clean performance. After 12 months, blocked grilles, cooling derate, valve issues, and stationkeeping degradation could leave 45% to 65%. After 18 months, corrosion defects, fouling-driven drag, fatigue hot spots, and more frequent faults could leave 25% to 40%. After 24 months without major service, a 10% to 25% useful-output range is not unreasonable. The unit may still float. It may still produce something. It may no longer be a bankable compute asset.
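One way to read those ranges is to ask what they imply for average output across a full 24-month unserviced interval. The sketch below joins the quoted ranges with straight lines and takes the time-weighted mean. It assumes the node starts at 100% of clean output at month 0 and declines linearly between the quoted points. Both are simplifications, and the few clean months mentioned above would raise the average slightly.

```python
# Ranges quoted in the text: (months in service, low, high),
# each as a fraction of clean performance.
RANGES = [(6, 0.70, 0.85), (12, 0.45, 0.65), (18, 0.25, 0.40), (24, 0.10, 0.25)]

def average_output(points):
    """Time-weighted mean of a piecewise-linear curve of (month, fraction) points."""
    area = 0.0
    for (m0, f0), (m1, f1) in zip(points, points[1:]):
        area += (m1 - m0) * (f0 + f1) / 2.0  # trapezoid for each segment
    return area / (points[-1][0] - points[0][0])

def curve(pick):
    # Assumption: 100% of clean output at month 0.
    return [(0, 1.0)] + [(month, pick(lo, hi)) for month, lo, hi in RANGES]

low = average_output(curve(lambda lo, hi: lo))
mid = average_output(curve(lambda lo, hi: (lo + hi) / 2.0))
high = average_output(curve(lambda lo, hi: hi))

print(f"Average output over 24 months: {low:.0%} to {high:.0%} (midpoint {mid:.0%})")
```

Under those assumptions a node left alone for two years averages roughly half of its clean output, which feeds directly into the fleet counts and the cost per useful kWh.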

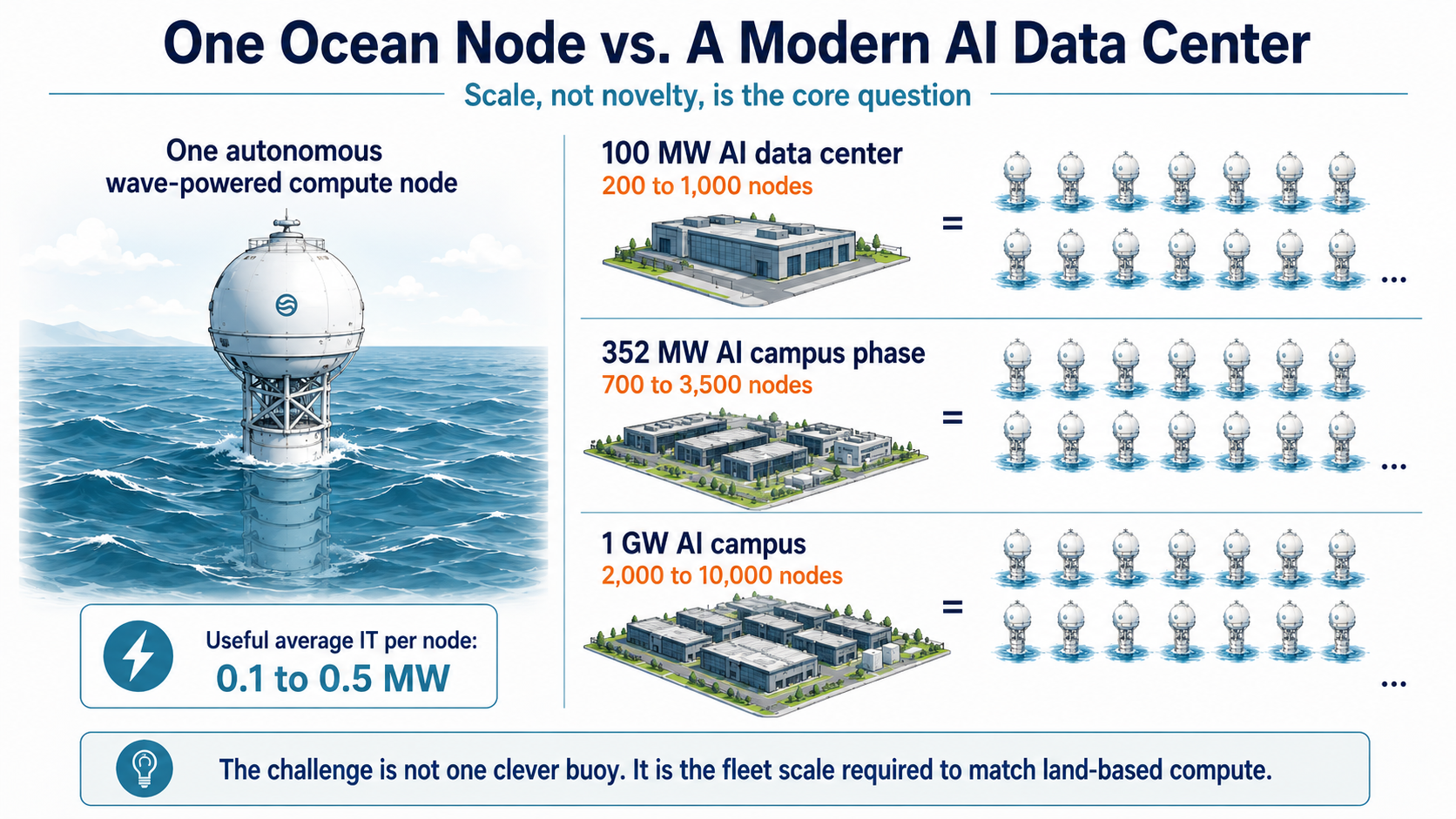

Then the scale problem arrives. A modern AI data center is not 5 MW. A smaller serious facility can be 100 MW of IT load. A large current phase can be around 350 MW. A major AI campus can be 1 GW. If an ocean node averages 0.5 MW of useful IT, which is optimistic, a 100 MW facility requires 200 nodes. A 350 MW facility requires 700. A 1 GW campus requires 2,000. If the node averages 0.25 MW, a base-case number I find easier to defend, those figures double to 400, 1,400, and 4,000. If early-commercial performance is 0.1 MW per node, they become 1,000, 3,500, and 10,000 nodes.

That is before spares, failed units, units in service, units in transit, and maintenance reserve. Add 25% to 50% for operational reality and the fleet count grows again. The investment story is not one clever buoy. It is hundreds to thousands of autonomous offshore industrial objects that all have to remain productive, visible, recoverable, insurable, and useful. That is a very different proposition from a prototype video.
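The fleet counts follow from dividing facility load by average useful output per node, then adding the reserve. A minimal sketch with illustrative function names follows. It uses integer kW and integer percentages so the rounding is exact.

```python
def productive_nodes(facility_mw: int, node_avg_kw: int) -> int:
    """Nodes needed to meet a facility's IT load, rounded up."""
    facility_kw = facility_mw * 1000
    return (facility_kw + node_avg_kw - 1) // node_avg_kw  # ceiling division

def fleet_with_reserve(nodes: int, reserve_pct: int) -> int:
    """Total fleet after adding spares and maintenance reserve, rounded up."""
    return (nodes * (100 + reserve_pct) + 99) // 100

# Facility sizes and per-node averages quoted in the text.
for node_kw in (500, 250, 100):
    for facility_mw in (100, 350, 1000):
        base = productive_nodes(facility_mw, node_kw)
        print(
            f"{facility_mw:>5} MW at {node_kw} kW/node: {base:>6,} productive, "
            f"{fleet_with_reserve(base, 25):,} to {fleet_with_reserve(base, 50):,} with reserve"
        )
```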

The maintenance arithmetic is where the system begins to look visibly broken. Suppose a 350 MW-equivalent fleet requires 1,400 productive nodes and perhaps 2,000 nodes including spares and maintenance reserve. Annual service alone means 2,000 divided by 365, or 5.5 planned services per calendar day. If only 60% of days are workable because of weather windows, that becomes about nine planned services per workable day. Add unplanned interventions and the number can move into the 15 to 25 per workable day range. If the service interval is six months rather than annual, double it. If the fleet is aiming at 1 GW, multiply again.
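The planned-service load can be sketched as fleet size times services per node per year, divided by the number of workable days. The function below covers planned services only. Unplanned interventions come on top, and the names are illustrative.

```python
def planned_services_per_workable_day(
    fleet_size: int,
    services_per_node_per_year: float,
    workable_fraction: float,
) -> float:
    """Planned services that must be completed on each weather-workable day."""
    workable_days = 365 * workable_fraction
    return fleet_size * services_per_node_per_year / workable_days

per_calendar_day = planned_services_per_workable_day(2000, 1, 1.0)
annual_service = planned_services_per_workable_day(2000, 1, 0.6)
six_month_service = planned_services_per_workable_day(2000, 2, 0.6)

print(f"Annual service, every day workable: {per_calendar_day:.1f} per day")
print(f"Annual service, 60% of days workable: {annual_service:.1f} per workable day")
print(f"Six-month service, 60% of days workable: {six_month_service:.1f} per workable day")
```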

Tow-back maintenance makes the arithmetic worse. If a node has to be towed hundreds of kilometers to port for meaningful service, each event can consume days of vessel time before repair even begins. A routine tow-back model for thousands of 85 meter offshore machines is not a data-center O&M model. It is an offshore logistics industry. The only plausible maintenance model is fast at-sea diagnostics, cleaning, modular replacement, remote reset, and rare retrieval. That requires dedicated service vessels, spares, trained crews, weather-window planning, fleet-routing software, and recovery capacity for disabled units. Maintenance is not a line item in this concept. Maintenance is the business model.

Insurance will not treat this like a server farm. It combines novel offshore energy machinery, autonomous drifting objects, collision exposure, storm risk, salvage risk, environmental cleanup risk, high-value electronics, business interruption, satellite dependence, and fleet software risk. A single pilot can be insured or self-insured under special terms. A fleet of thousands cannot simply be waved through. Insurers will ask what happens after loss of generation, loss of steering, loss of communications, partial flooding, a collision, fishing-gear entanglement, or a storm that damages many nodes at once. The premium is not paperwork. It is the market’s priced view of physical risk.

This brings us to the $0.02/kWh claim that has circulated around Panthalassa. It fails a basic smell test when applied to useful compute. If a node delivered 0.5 MW of average useful IT, its annual output would be 0.5 MW multiplied by 8,760 hours, or 4,380 MWh. At $0.02/kWh, that electricity is worth $87,600 per year. That annual amount has to cover capital recovery, offshore maintenance, insurance, spares, monitoring, service vessels, failures, retrieval, and operations. That is not plausible for an 85 meter autonomous offshore machine. Even at 2 MW average useful output, the annual 2-cent electricity budget is only $350,400. That may not cover much offshore vessel time, much less capital recovery.
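The annual budget implied by a given electricity price is average power times hours in a year times price. A short sketch using the figures above, with an illustrative function name:

```python
HOURS_PER_YEAR = 8760

def annual_energy_budget_usd(avg_useful_mw: float, price_usd_per_kwh: float) -> float:
    """Annual value of a node's useful output at a given price per kWh."""
    annual_kwh = avg_useful_mw * 1000 * HOURS_PER_YEAR
    return annual_kwh * price_usd_per_kwh

# The two cases from the text, both at $0.02/kWh.
for avg_mw in (0.5, 2.0):
    budget = annual_energy_budget_usd(avg_mw, 0.02)
    print(f"{avg_mw} MW average at $0.02/kWh: ${budget:,.0f} per year")
```

Everything the node costs to own and operate in a year, including capital recovery, has to fit inside that number for the $0.02/kWh claim to hold.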

The problem is not that wave energy is impossible. The problem is that offshore ownership is not free. If the actual all-in cost of useful IT power is $0.50/kWh, $1/kWh, or several dollars per kWh after maintenance, insurance, downtime, and parasitics, the compute economics change. High-end GPUs are expensive capital assets. They need high utilization to make sense. If wave conditions throttle the node, if service intervals pull nodes offline, if satellite links narrow the workload pool, and if marine O&M drives up energy cost, the GPU-hour becomes expensive quickly. Customers do not buy romantic ocean energy. They buy useful compute at a reliable price.

This is where Thiel’s involvement matters, but not as proof of anything by itself. I have written before about Thiel, LightSail, and the broader mistake of applying software disruption logic to energy. LightSail was a compressed-air storage company with smart people, a clever technical story, and a Silicon Valley energy-disruption frame. It ran into the usual energy problems: thermodynamics, capital cost, market fit, deployment reality, and the fact that the grid is not the internet. Panthalassa is not LightSail. It is more ambitious. But the pattern rhymes. Find a real bottleneck. Frame incumbents as slow. Propose a radical bypass. Underweight operations.

A venture investor can rationally fund a high-risk prototype. AI power demand is real. Interconnection queues are real. Wave energy is vast. Inference workloads can be flexible. Avoiding export cables is clever. A successful node could create useful IP in marine autonomy, power electronics, cooling, corrosion management, or wave-energy conversion even if the data-center thesis fails. Venture capital can tolerate many failures for one extreme upside case. Infrastructure finance cannot. Hyperscalers, insurers, lenders, regulators, and maritime authorities need repeatable, safe, serviced, costed operations.

The evidence that would change the assessment is straightforward. Show measured net electrical bus power over months in real ocean conditions. Show useful IT power after parasitic loads. Show stationkeeping energy and drift envelope in real current and wave fields. Show failure response after loss of generation, communications, navigation, and steering. Show biofouling, corrosion, and cooling performance after 6, 12, and 24 months. Show at-sea service time per node. Show insurance terms. Show workload economics over satellite links. Show multi-node fleet control. Show a costed path to hundreds or thousands of nodes. That is the bar between an interesting prototype and investable infrastructure.

My take on venture capitalism in the United States, with or without Thiel, is that it has turned into a system which enables a lot of people to make a lot of money without delivering anything remotely valuable to the economy. It’s been entirely financialized. The floating nonsense of wave-generation AI inference is just more evidence of that. Zero technical or operational due diligence was done. This is all narrative.

Panthalassa is interesting because it responds to a problem most consider real and tries to avoid genuine bottlenecks. It is not interesting because it has proven a new class of data center. The public case still looks like a stack of hard assumptions presented as a clean bypass. The LinkedIn posts were compelling because the story is compelling. My skepticism came from the arithmetic. Thiel’s backing made sense because the concept fits a long pattern of his weak energy-disruption thinking. The final question is not whether waves contain energy. They do. The question is whether that energy can survive corrosion, fouling, stationkeeping, insurance, maintenance, satellite limits, and fleet logistics cheaply enough to become useful AI compute. That is the claim. That is also the part that still looks broken.
